PSM Tutorial #2-4: Vertex Buffers

2013-02-13

In the previous tutorial we went over the concepts behind texture coordinates. Now there is only one major topic left to cover before we wrap everything up: vertex buffers.

Now we will look at the next set of declarations in the code. As always, I will start with the full code sample.

public class AppMain

{

static protected GraphicsContext graphics;

static ShaderProgram shaderProgram;

static Texture2D texture;

static float[] vertices=new float[12];

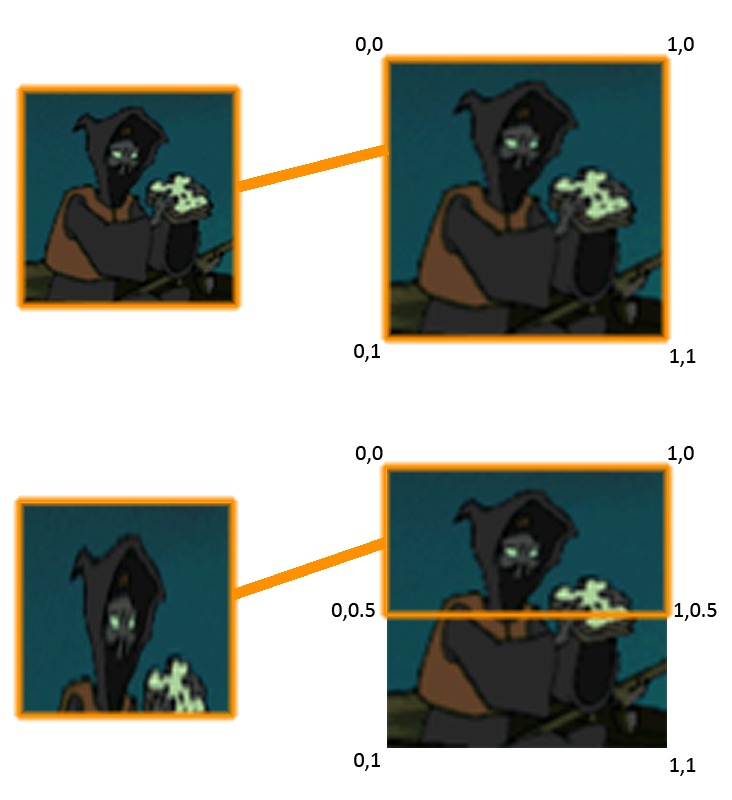

static float[] texcoords = {

0.0f, 0.0f,// 0 top left.

0.0f, 1.0f,// 1 bottom left.

1.0f, 0.0f,// 2 top right.

1.0f, 1.0f,// 3 bottom right.

};

static float[] colors = {

1.0f,1.0f,1.0f,1.0f,// 0 top left.

1.0f,1.0f,1.0f,1.0f,// 1 bottom left.

1.0f,1.0f,1.0f,1.0f,// 2 top right.

1.0f,1.0f,1.0f,1.0f,// 3 bottom right.

};

const int indexSize = 4;

static ushort[] indices;

static VertexBuffer vertexBuffer;

// Width of texture.

static float Width;

// Height of texture.

static float Height;

static Matrix4 unitScreenMatrix;

public static void Main (string[] args)

{

Initialize ();

while (true) {

SystemEvents.CheckEvents ();

Update ();

Render ();

}

}

public static void Initialize ()

{

graphics = new GraphicsContext();

ImageRect rectScreen = graphics.Screen.Rectangle;

texture = new Texture2D("/Application/resources/Player.png", false);

shaderProgram = new ShaderProgram("/Application/shaders/Sprite.cgx");

shaderProgram.SetUniformBinding(0, "u_WorldMatrix");

Width = texture.Width;

Height = texture.Height;

vertices[0]=0.0f;// x0

vertices[1]=0.0f;// y0

vertices[2]=0.0f;// z0

vertices[3]=0.0f;// x1

vertices[4]=1.0f;// y1

vertices[5]=0.0f;// z1

vertices[6]=1.0f;// x2

vertices[7]=0.0f;// y2

vertices[8]=0.0f;// z2

vertices[9]=1.0f;// x3

vertices[10]=1.0f;// y3

vertices[11]=0.0f;// z3

indices = new ushort[indexSize];

indices[0] = 0;

indices[1] = 1;

indices[2] = 2;

indices[3] = 3;

//vertex pos, texture, color

vertexBuffer = new VertexBuffer(4, indexSize, VertexFormat.Float3, VertexFormat.Float2, VertexFormat.Float4);

vertexBuffer.SetVertices(0, vertices);

vertexBuffer.SetVertices(1, texcoords);

vertexBuffer.SetVertices(2, colors);

vertexBuffer.SetIndices(indices);

graphics.SetVertexBuffer(0, vertexBuffer);

unitScreenMatrix = new Matrix4(

Width*2.0f/rectScreen.Width,0.0f, 0.0f, 0.0f,

0.0f, Height*(-2.0f)/rectScreen.Height,0.0f, 0.0f,

0.0f, 0.0f, 1.0f, 0.0f,

-1.0f, 1.0f, 0.0f, 1.0f

);

}

public static void Update ()

{

}

public static void Render ()

{

graphics.Clear();

graphics.SetShaderProgram(shaderProgram);

graphics.SetTexture(0, texture);

shaderProgram.SetUniformValue(0, ref unitScreenMatrix);

graphics.DrawArrays(DrawMode.TriangleStrip, 0, indexSize);

graphics.SwapBuffers();

}

}

Skipping past the declarations covered in the previous tutorials, we arrive at the vertex buffer declaration:

```csharp
static VertexBuffer vertexBuffer;
```

In OpenGL there are several ways to send vertex data to the GPU to be drawn on the screen, such as the old immediate mode (glBegin/glEnd) and client-side vertex arrays. The modern way is to use vertex buffers.

A vertex buffer is a contiguous block of data that your program sends to the GPU; on the program side, that data is stored in an array. In plain OpenGL you set up an array of data and then call functions that tell OpenGL which array to copy into GPU memory, along with other information such as the size of the array and the type of data it represents (for example, glBufferData takes the size in bytes, and glVertexAttribPointer describes the component type and how many components make up one vertex).
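As a rough illustration of the information a plain OpenGL upload needs, the sketch below takes the three arrays from this tutorial and works out each one's size in bytes and how many floats describe a single vertex. It is plain C# with no PSM or OpenGL calls, and the helper names `SizeInBytes` and `FloatsPerVertex` are made up for this example.

```csharp
using System;

// The per-vertex arrays from this tutorial, one vertex per line.
float[] vertices = {
    0.0f, 0.0f, 0.0f,  // 0 top left
    0.0f, 1.0f, 0.0f,  // 1 bottom left
    1.0f, 0.0f, 0.0f,  // 2 top right
    1.0f, 1.0f, 0.0f,  // 3 bottom right
};
float[] texcoords = {
    0.0f, 0.0f,  // 0 top left
    0.0f, 1.0f,  // 1 bottom left
    1.0f, 0.0f,  // 2 top right
    1.0f, 1.0f,  // 3 bottom right
};
float[] colors = new float[4 * 4];
Array.Fill(colors, 1.0f);  // opaque white (r, g, b, a = 1) for all 4 vertices

const int vertexCount = 4;

// Hypothetical helpers: the byte count of an array, and how many
// floats each vertex occupies in it.
int SizeInBytes(float[] data) => data.Length * sizeof(float);
int FloatsPerVertex(float[] data) => data.Length / vertexCount;

Console.WriteLine($"positions: {SizeInBytes(vertices)} bytes, {FloatsPerVertex(vertices)} floats per vertex");
Console.WriteLine($"texcoords: {SizeInBytes(texcoords)} bytes, {FloatsPerVertex(texcoords)} floats per vertex");
Console.WriteLine($"colors:    {SizeInBytes(colors)} bytes, {FloatsPerVertex(colors)} floats per vertex");
```

This prints 48, 32 and 64 bytes with 3, 2 and 4 floats per vertex; those per-vertex counts are the same 3, 2 and 4 that reappear in the VertexBuffer constructor.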

In PSM it’s essentially the same, but Sony provides a VertexBuffer class that wraps much of the functionality you commonly need with vertex buffers. A single VertexBuffer can hold the vertex position, UV (texture coordinate), and color data for the same set of vertices, and you can attach other kinds of per-vertex data if you wish. Sony's constructor also takes the size of the index buffer, so the vertex data and its indices are managed by the same object.

So let's take a look at the code where we set up our vertex buffer:

```csharp
//vertex pos, texture, color
vertexBuffer = new VertexBuffer(4, indexSize, VertexFormat.Float3, VertexFormat.Float2, VertexFormat.Float4);
vertexBuffer.SetVertices(0, vertices);
vertexBuffer.SetVertices(1, texcoords);
vertexBuffer.SetVertices(2, colors);
vertexBuffer.SetIndices(indices);
graphics.SetVertexBuffer(0, vertexBuffer);
```

First we create our VertexBuffer object. The first constructor argument is the number of vertices we will send to the GPU, and the second is the number of indices. After those two arguments comes a variable-length list of arguments.

Variable-length arguments are similar in spirit to C’s varargs, except that in C# they are declared with the params keyword, are type-checked, and arrive in the method as an ordinary array. For more information about C#’s params keyword, see this page:

http://msdn.microsoft.com/en-us/library/w5zay9db(v=vs.71).aspx
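To see how the params keyword collects the trailing arguments, here is a small stand-alone sketch whose signature loosely mirrors the shape of the VertexBuffer constructor call. `DescribeBuffer` is a hypothetical function, not part of PSM, and it takes plain integers (floats per vertex) instead of VertexFormat values.

```csharp
using System;

// Hypothetical function: two fixed parameters followed by a params array.
// Every argument after indexCount is collected into floatsPerStream.
string DescribeBuffer(int vertexCount, int indexCount, params int[] floatsPerStream)
{
    var parts = new string[floatsPerStream.Length];
    for (int stream = 0; stream < floatsPerStream.Length; stream++)
    {
        parts[stream] = $"stream {stream}: {floatsPerStream[stream]} floats";
    }
    return $"{vertexCount} vertices, {indexCount} indices, " +
           $"{floatsPerStream.Length} streams ({string.Join(", ", parts)})";
}

// Position (3), texture coordinate (2) and color (4), as in the tutorial.
Console.WriteLine(DescribeBuffer(4, 4, 3, 2, 4));

// A caller may also pass an existing array, or no extra arguments at all.
Console.WriteLine(DescribeBuffer(4, 4, new[] { 3, 2 }));
Console.WriteLine(DescribeBuffer(4, 4));
```

Unlike C’s varargs, the method never has to walk an untyped argument list: the extra arguments are checked against int at compile time and the method can simply ask the array for its Length.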

Each of the variable arguments defines one stream of per-vertex data inside the vertex buffer. A stream is declared with a VertexFormat enum value that describes the format of the data that will be passed in through it.

```csharp
//vertex pos, texture, color
vertexBuffer = new VertexBuffer(4, indexSize, VertexFormat.Float3, VertexFormat.Float2, VertexFormat.Float4);
```

So here we say we want to pass in 4 vertices, 4 indices (the value of indexSize), a set of 3 floats per vertex (Float3), a set of 2 floats per vertex (Float2), and a set of 4 floats per vertex (Float4). The reason for these sizes becomes clearer when you look at the following code:

```csharp
vertexBuffer.SetVertices(0, vertices);
vertexBuffer.SetVertices(1, texcoords);
vertexBuffer.SetVertices(2, colors);
vertexBuffer.SetIndices(indices);
graphics.SetVertexBuffer(0, vertexBuffer);
```

As you can see, we use the SetVertices method to assign which array holds which piece of data. The first argument is the index of the stream we are filling, which corresponds to the order in which we listed the formats in the VertexBuffer constructor. In stream 0 we pass the vertex positions, which need 3 floats (x, y, z) per vertex; in stream 1 we pass the texture coordinates, which take 2 floats (u, v) per vertex; and finally in stream 2 we pass the colors, which take 4 floats (r, g, b, a) per vertex.
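The pairing between constructor arguments and stream indices can be modeled with a toy stand-in. The sketch below is hypothetical plain C#, not the PSM implementation: it records the floats per vertex of each stream in constructor order, then checks that an array handed to a given stream index has exactly vertexCount × floats-per-vertex elements. The real VertexBuffer may validate its input differently.

```csharp
using System;

const int vertexCount = 4;

// Floats per vertex for each stream, in the same order as the
// VertexFormat arguments: Float3 (position), Float2 (uv), Float4 (color).
int[] floatsPerStream = { 3, 2, 4 };
float[][] streams = new float[floatsPerStream.Length][];

// Hypothetical stand-in for SetVertices: `stream` is the position of the
// format in the constructor's argument list.
void SetStream(int stream, float[] data)
{
    int expected = vertexCount * floatsPerStream[stream];
    if (data.Length != expected)
        throw new ArgumentException(
            $"stream {stream} expects {expected} floats but got {data.Length}");
    streams[stream] = data;
}

SetStream(0, new float[12]);  // 4 vertices x 3 floats (x, y, z)
SetStream(1, new float[8]);   // 4 vertices x 2 floats (u, v)
SetStream(2, new float[16]);  // 4 vertices x 4 floats (r, g, b, a)

// Handing the 12-float position array to the uv stream is caught here.
try
{
    SetStream(1, new float[12]);
}
catch (ArgumentException e)
{
    Console.WriteLine(e.Message);  // stream 1 expects 8 floats but got 12
}
```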

Then we send our index array to the VertexBuffer via the SetIndices method.
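The four indices only describe a quad because Render draws them as a triangle strip (DrawMode.TriangleStrip in the listing). In a strip, each new index forms a triangle with the two before it, and every second triangle swaps its first two vertices so that all triangles keep the same winding order. The sketch below expands an index list that way in plain C#; `StripToTriangles` is a hypothetical helper, not a PSM call.

```csharp
using System;
using System.Collections.Generic;

// Expand a triangle-strip index list into individual triangles.
// Triangle i uses indices i, i+1, i+2; odd-numbered triangles swap the
// first two so that every triangle has the same winding order.
List<(ushort, ushort, ushort)> StripToTriangles(ushort[] strip)
{
    var triangles = new List<(ushort, ushort, ushort)>();
    for (int i = 0; i + 2 < strip.Length; i++)
    {
        triangles.Add(i % 2 == 0
            ? (strip[i], strip[i + 1], strip[i + 2])
            : (strip[i + 1], strip[i], strip[i + 2]));
    }
    return triangles;
}

// The tutorial's indices: 0 top left, 1 bottom left, 2 top right, 3 bottom right.
ushort[] indices = { 0, 1, 2, 3 };
foreach (var (a, b, c) in StripToTriangles(indices))
{
    Console.WriteLine($"{a} {b} {c}");
}
// 0 1 2  (top left, bottom left, top right)
// 2 1 3  (top right, bottom left, bottom right)
```

Together the two triangles cover the whole quad, which is why four indices are enough here instead of the six a plain triangle list would need.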

Finally, we hand the entire vertex buffer to the graphics context with SetVertexBuffer, so that the draw call in Render reads its vertex data from it.

Since we covered most of the harder concepts in previous tutorials, this one ended up being fairly short. In the next tutorial we will go over the Render method and wrap up this tutorial series!

After that we can get into more interesting things, like making a game.
